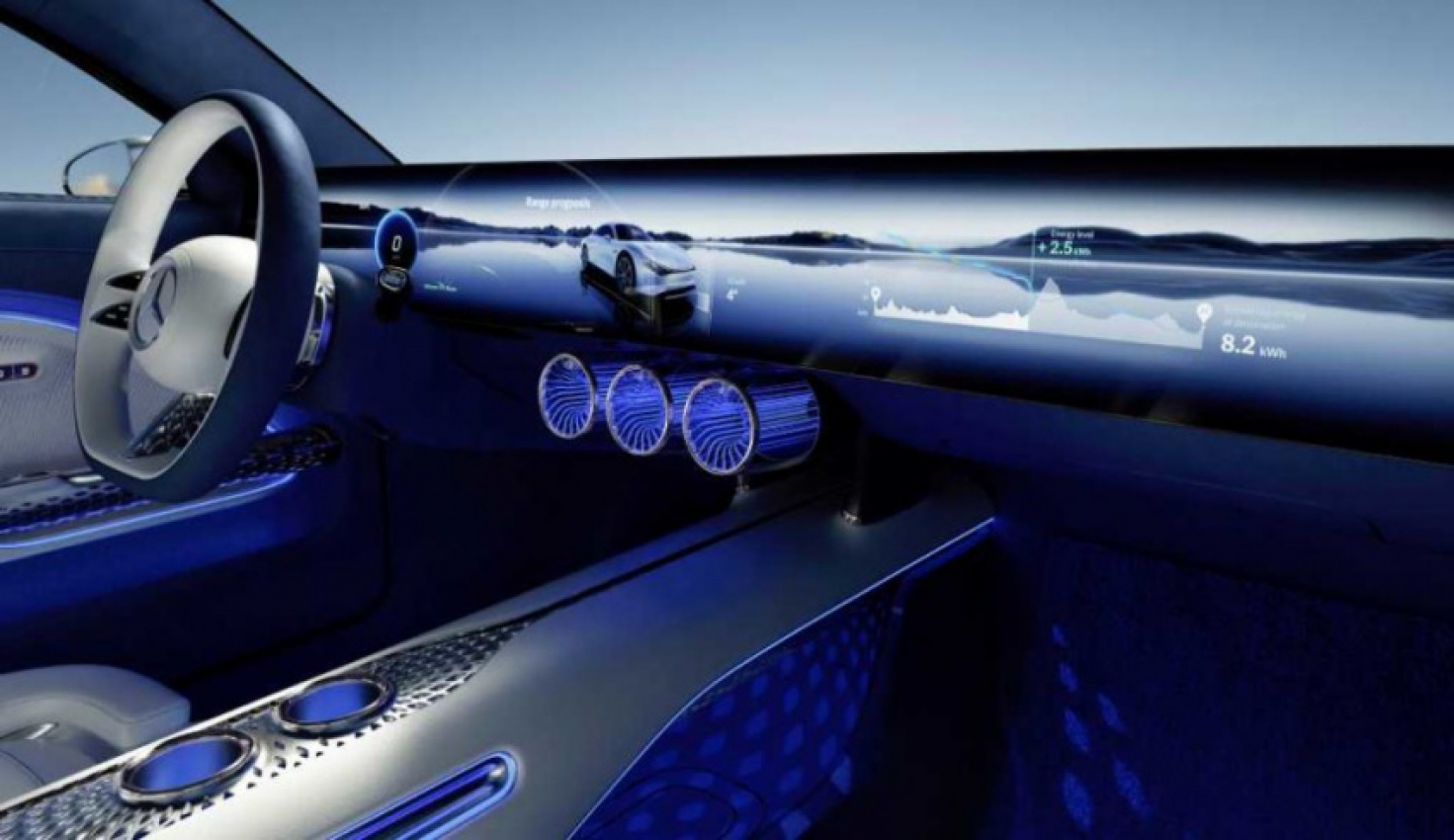

Despite being revealed as early as 4 January, the Mercedes Vision EQXX is likely to prove one of the most exciting concept cars of 2022.

The German brand promised big – the car could travel more than 1,000 km on a single charge while using less than 10 kWh per 100 km. It also had a super-slippery drag coefficient of just 0.17, whereas other highly aerodynamic cars such as the Tesla Model S and Lucid Air manage around 0.2.

However, it wasn’t simply the design and performance of the Vision EQXX that caught the eye. It also took an innovative approach to in-car infotainment and voice control. Sonantic, the company behind the AI, promised “emotion, expression, and empathy” from the in-car voice assistant. Auto Futures has been catching up with Mercedes’ Nils Schanz and Sonantic’s Zeena Qureshi to find out more.

Finding a Common Voice

“Sonantic was approached by Daimler, the parent company of Mercedes-Benz, to partner with their Speech Technology & Dialogue Design teams on the Vision EQXX,” says Qureshi, Sonantic’s CEO.

“At Sonantic, we have spent years developing our proprietary voice platform, which creates the highest quality AI voice models that can express the full range of human emotions. This partnership represents an exciting intersection, where the future of cars meets the future of AI voice tech.”

The Vision EQXX’s voice control system, which drivers can wake up by saying “Hey Mercedes,” is designed to make interacting with the car feel more intuitive.

“The AI of MBUX – our innovative multimedia system Mercedes-Benz User Experience – will learn over time the interactions of the customer with the MBUX-system itself,” explains Schanz, who is responsible for user interaction and voice control at Mercedes-Benz Cars.

“Based on this individual usage we configure the models and deliver proactive and contextual recommendations. All the user-based data will only be used inside the vehicle.”

Voice control may have become a fixture of many homes across Europe and America, with millions of people talking to Alexa, Google Assistant, and Siri every day. But in the world of cars, it remains relatively uncommon.

However, as many new models eschew physical knobs and buttons for everything from the air conditioning to the radio, arguably becoming harder to use as a result, voice control is emerging as an efficient alternative.

Developing the EQXX’s Voice

The days when sat nav manufacturers would happily sell you a downloadable version of Snoop Dogg’s voice to guide you around are long gone.

For Sonantic, building the Vision EQXX’s voice involved a great deal of technology.

“We do not require big recording sets since we focus on the quality of speech and how to capture the nuances, like breath, pitch and emotion,” explains Qureshi.

“Sonantic has a two-part approach: on one side we have a proprietary Voice Engine that focuses on all the aspects of human speech that make a voice sound realistic, such as accents, pitch, pacing, tone and emotions.”

However, according to Qureshi, it isn’t all computers.

“On the other side, we partner with talented voice actors who train their own AI to match their performances.”

Of course, speaking to a disembodied, computerised voice while sitting in a luxury vehicle like the Vision EQXX perhaps isn’t the best experience.

“Everyone can tell when they are speaking to an automated system,” says Qureshi. “We believe with expressive AI voice technology, in-car assistants can be transformed into comforting companions.”

“Drivers have come to rely on in-car assistants for a variety of tasks, and we believe that hyper-realistic voice interactions foster a deeper connection with end users, improving the mood and overall experience.”

This, perhaps, will make talking to the Vision EQXX less like dealing with the annoying voice control on your phone and more like talking to an old-fashioned chauffeur – a disembodied Parker from Thunderbirds.

“The sound of the voice assistant is a very important aspect for the luxury feeling of such a system,” says Schanz.

“The goal was to have a speech synthesis system integrated, that sounds as natural as a human voice. By adding the possibility to react to system states or behaviour in a more emotional way (e.g. speaking neutral or impressing happiness), we aim to build the most natural voice assistant you can experience in cars.”

Building the Vision

There is, of course, one large elephant in the room. The Vision EQXX is a concept car – and isn’t slated for production.

So, has this all been an academic exercise with no bearing on reality? Not quite.

“The Vision EQXX is a new technology blueprint for series production in many ways,” says Schanz.

“We started some years back with integrating AI in our MBUX System (e.g. predicting calls or commuting routes). We continuously will improve the MBUX system, especially the MBUX Voice Assistant, by integrating the latest AI and machine-learning technologies and bringing the UX to the next level.”

It’s better, then, to think of the Vision EQXX as a rolling testbed for Mercedes’ future cars. With the German giant rolling out electric cars with increasing regularity, you can expect to see more drivers chatting away to their voice assistants in the years to come.
